It’s been a busy few months, but I have a few days to catch my breath now that it’s spring break and most people (except me) have gone away! One question that’s come up quite a bit this semester is how to associate raster data with coinciding vector data. I’ll summarize some approaches in this post using ArcGIS Pro and QGIS, to summarize raster values for polygons (zonal statistics) and to assign raster values to points (aka raster sampling).

Zonal Statistics: Summarize Rasters by Area

Imagine that you have quantitative values such as temperature or a vegetation index in a raster grid, and you want to use this data to calculate an average for counties or metro areas. The goal is to have a new attribute column in the vector layer that contains the summarized raster value, perhaps because you want to make thematic maps of that value, or you want to use it in conjunction with other variables to run spatial statistics, or you just want a plain and simple summary for given places.

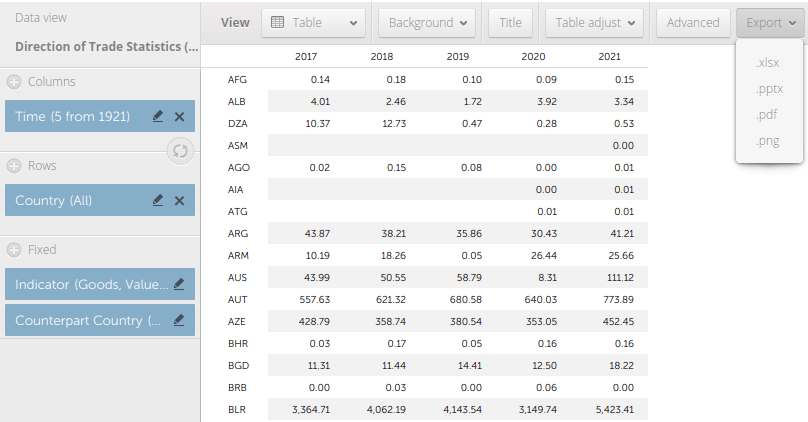

The term zonal statistics is used to define any operation that calculates statistics on cell values of a raster within an area or zone defined by another dataset, either a raster or a vector. The ArcGIS Pro toolbox has a Zonal Statistics tool where the output is a new raster file with cells that are summarized by the input zones. That’s not desirable for the use case I’m presenting here; the better choice is the Zonal Statistics as Table tool. The output is a table containing the unique identifiers of the raster and vector, the summary stats you’ve generated (average, sum, min, max, etc), and a count of the number of cells used to generate the summary. You can join this resulting table back to the vector file using their common unique identifier in a table join.

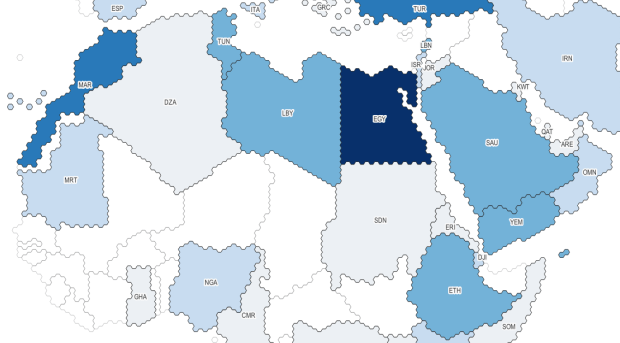

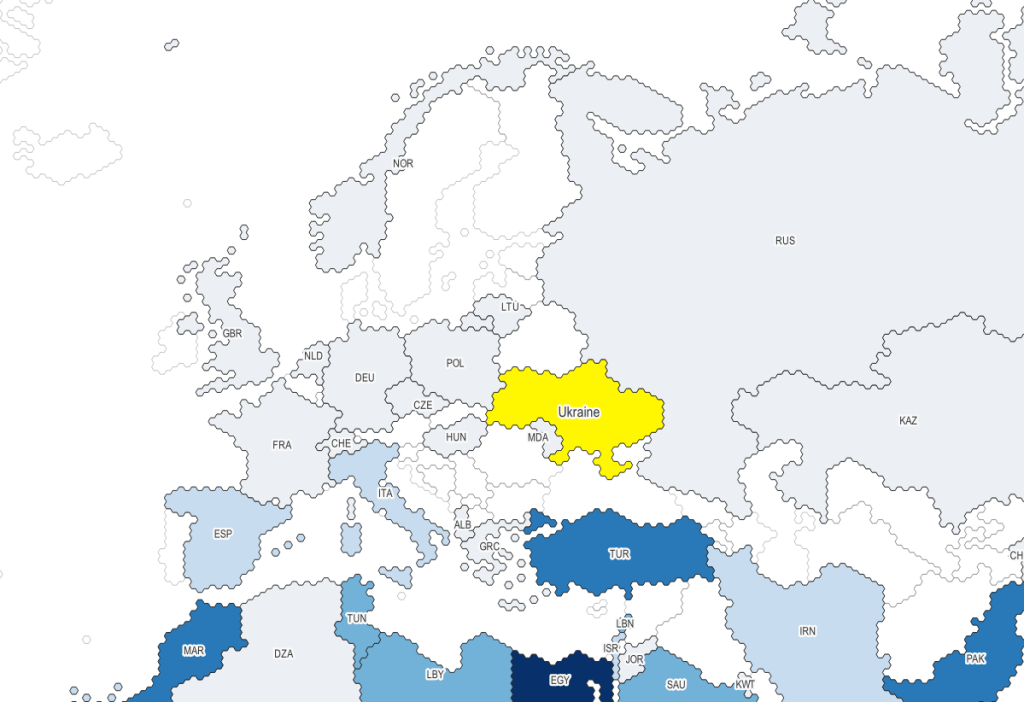

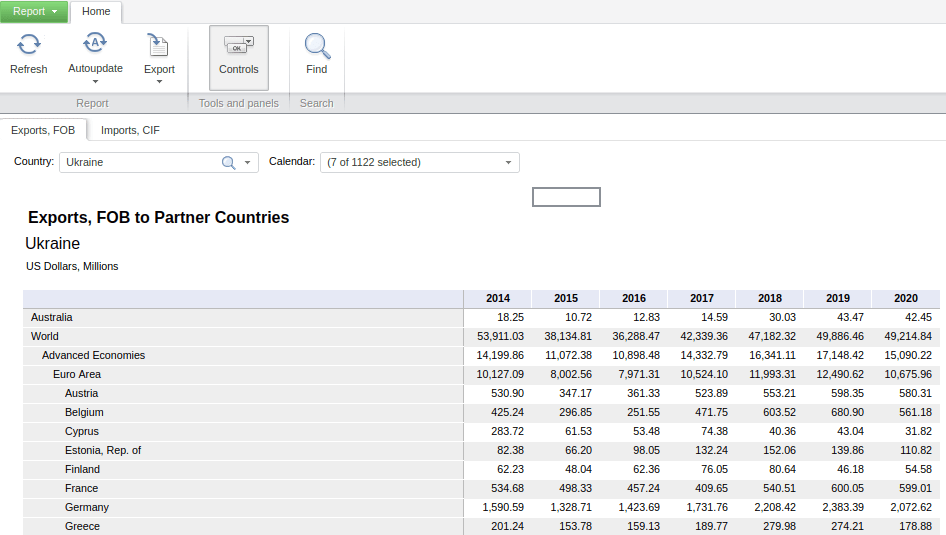

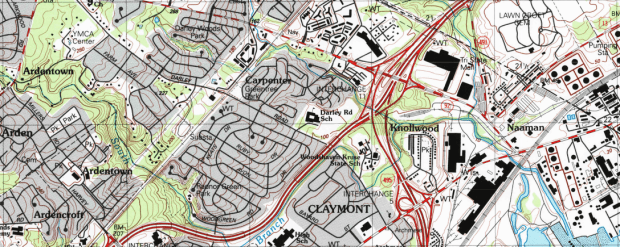

In the example below, I’m using counties from the census TIGER files for southern New England as my Input Feature Zone, the AFFGEOID (Census ANSI / FIPS code) to identify the Zone Field, and a temperature grid for January 1, 2020 from PRISM as the Input Value Raster. I’m calculating the mean temperature for the counties on that day.

The output table consists of one record for each zone / county, with the count of the cells used to create the average, and the mean temperature (in degrees Celsius). This table can be joined back to the original vector feature (select the county feature in the Contents, right click, Joins and Relates – Join) to thematically map the average temp.

In QGIS, this tool is simply called Zonal Statistics; search for it in the Processing toolbox. The vector with the zones is the Input layer, and the Raster layer is the grid with the values. By default the summary stats are the count, sum, and mean, but you can check the Statistics to calculate box to select others. Unlike ArcGIS, QGIS allows you to write output as a table or a new shapefile / geopackage, which carries along the feature geometry from the Input zones and adds the summaries, allowing you to skip the step of having to do a table join (if you opted to create a table, you could join it to the zones using the Joins tab under the Properties menu for the vector features).

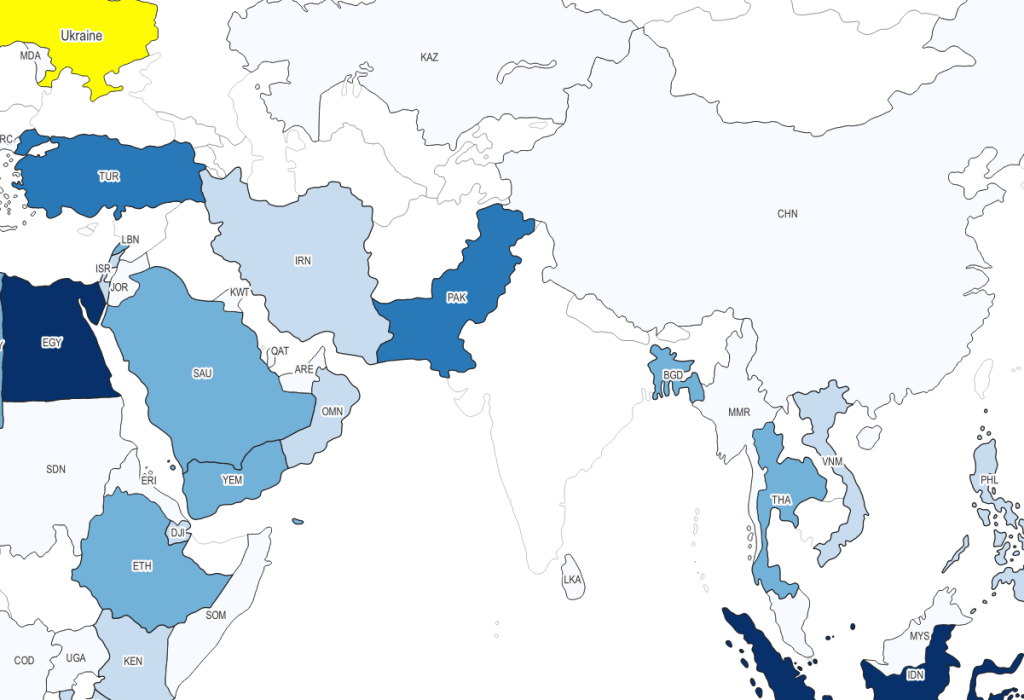

Extract Raster Values for Point Features

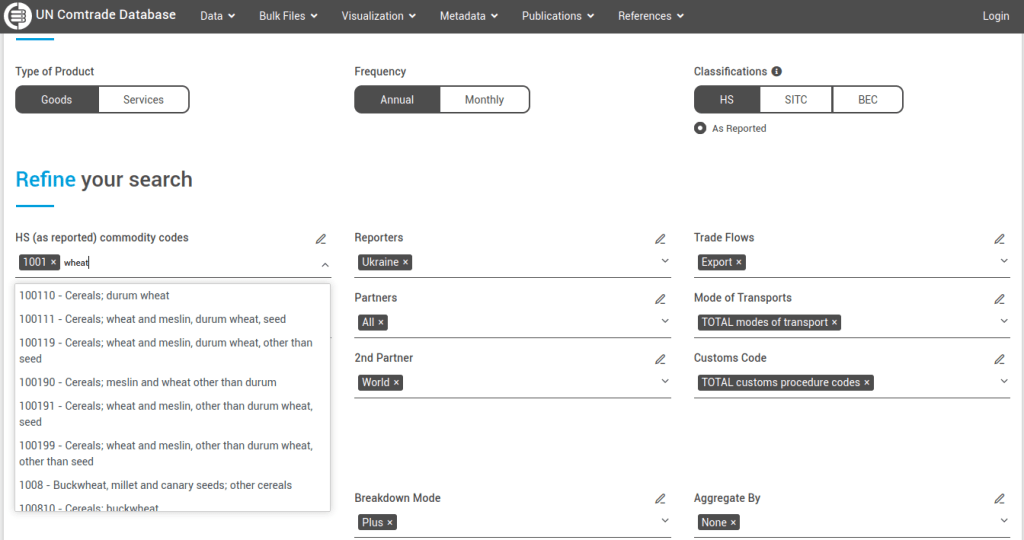

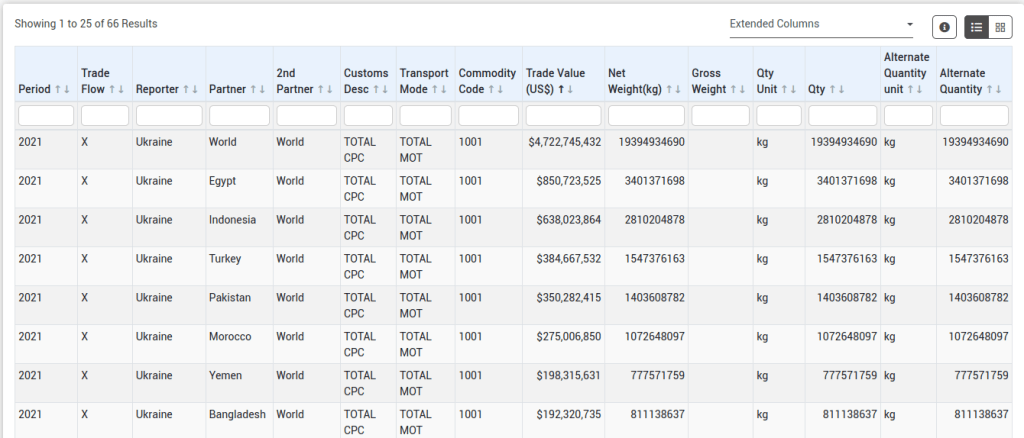

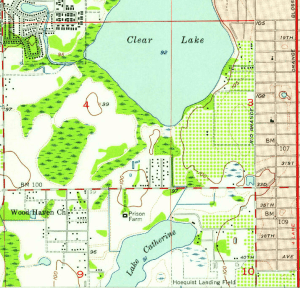

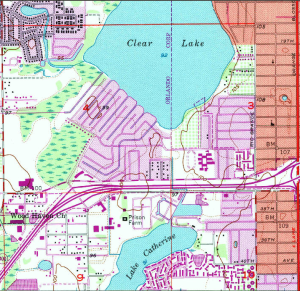

Zonal stats allows you to summarize raster data within a polygon. But what if you had point features, and wanted to assign each point the value of the raster cell that it falls within? In ArcGIS Pro, search the toolbox for the Extract Values to Points tool. You select your input points and raster, and a new point feature that will include the raster values. The default is to take the value for the cell that the point falls within, but there is an Interpolate option that will calculate the value from adjacent cells. The output point feature contains a new column called RASTERVALU. I created some phony point data and used it to generate the output below.

In QGIS the name of this tool is Sample raster values, which you can find in the Processing toolbox. Input the points, choose a raster layer, and write the output to a new vector point file. Unlike ArcGIS, there isn’t an option for interpolation from surrounding cells; you simply get the value for the cell that the point falls within. If you needed to interpolate, you can go to the Plugins menu, enable the SAGA plugin, and in the Processing toolbox try the SAGA tool Raster Values to Points instead.

A variation on this theme would be to create and assign an average value around each point at a given distance, such as the average temperature within five miles. One way to achieve this would be to use the buffer tools in either ArcGIS or QGIS to create distinct buffers around each point at the specified distance. The buffer will automatically carry over all the attributes from the point features, including unique identifiers. Then you can run the zonal statistics tools against the buffer polygons and raster to compute the average, and if need be do a table join between the output table and the original point layer using their common identifier.

Wrap-up

In using any of these tools, it’s important to consider the resolution of the raster (i.e. the size of the grid cell):

1. Relative to the size of the zonal areas or number of points, and

2. In relation to the phenomena that you’re studying.

When larger grid cells or zonal areas are used for measurement, any phenomena becomes more generalized, and any variations within these large areas become masked. The temperature grid cells in this example had a resolution of 2.5 miles, which was suitable for creating county summaries. Summarizing data for census tracts at this resolution would be less ideal, as the tracts are much smaller than the cells, with the cell value characterizing a much larger area. This might be okay in the case of temperature, which tends not to vary considerably over a distance of a few miles. In contrast, averaging temperature data for states is not worthwhile, as states vary considerably in size and most are large enough that they contain multiple ecosystems and elevation levels.

The solutions I’ve described here are the desktop GIS solutions. You could also use either spatial SQL in a geodatabase or a spatial extension in a scripting language like Python or R to perform similar operations. In both cases a basic overlay and intersection statement is used, in conjunction with some grouping function for calculating summaries. I’ve been doing a lot more spatial Python work with geopandas these past few months – perhaps a topic for a subsequent post…

You must be logged in to post a comment.